EDUCATION TECHNOLOGY

Post-Test Coach

AI Math Tutors: 99% Accuracy with Solution Injection

How we built an AI math tutor with 99% accuracy using pre-computed solution injection. GPT-3.5 prompt engineering delivers immediate post-test feedback at scale.

THE CHALLENGE

The problem.

Students forget their reasoning within hours of taking a test. Traditional coaching models deliver feedback 48 hours later, when the mental context is gone. Human coaches can't scale to provide immediate, personalized feedback for every student on every problem. This timing gap undermines learning effectiveness.

Partnered with Alpha to build Post-Test Coach, an AI-powered tutoring system that delivers feedback the moment students complete an assessment. Speed was only half the challenge.

GPT-3.5 can't reliably solve multi-step math problems. It hallucinates steps, skips logic, and produces plausible-sounding wrong answers, which makes off-the-shelf LLMs unreliable in education, where accuracy matters.

We needed 99%+ accuracy to match or exceed the consistency of human coaches. Testing revealed that direct prompting failed on complex problems: the model would get the arithmetic right but lose track of algebraic manipulation or skip validation steps.

THE SOLUTION

What we built.

Pre-Computation Module Architecture

The solution: pre-compute correct solutions offline using a dedicated math engine. Store step-by-step breakdowns. Inject these solutions into the LLM's context when coaching students. This transformed the task from "solve this problem" to "guide the student using this known-correct solution."

Built a separate service that processes test questions before students see them. For each problem, the system generates a complete solution path with intermediate steps and reasoning. This happens offline, not during student interaction.
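The offline pre-computation step can be sketched as below. This is a minimal illustration, not the production service: it assumes every question is a one-variable linear equation a*x + b = c, and `precompute_linear_solution`, `precompute_all`, and the store layout are hypothetical names. A real deployment would call a full math engine or computer algebra system instead of this hand-written solver.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Step:
    description: str  # what the student should do at this step
    equation: str     # the equation after the step is applied


def precompute_linear_solution(a: Fraction, b: Fraction, c: Fraction) -> tuple[list[Step], Fraction]:
    """Solve a*x + b = c exactly and record every intermediate step.

    Exact Fraction arithmetic stands in for the dedicated math engine, so
    no floating-point rounding can leak into the stored solution.
    """
    if a == 0:
        raise ValueError("coefficient a must be non-zero")
    steps = [Step("Start from the original equation", f"{a}x + {b} = {c}")]
    rhs = c - b
    steps.append(Step(f"Subtract {b} from both sides", f"{a}x = {rhs}"))
    x = rhs / a
    steps.append(Step(f"Divide both sides by {a}", f"x = {x}"))
    return steps, x


def precompute_all(questions: dict[str, tuple[int, int, int]]) -> dict[str, dict]:
    """Offline batch job: build the solution store before any student session."""
    store = {}
    for question_id, (a, b, c) in questions.items():
        steps, answer = precompute_linear_solution(Fraction(a), Fraction(b), Fraction(c))
        store[question_id] = {"answer": answer, "steps": steps}
    return store
```

Because the store is built before students arrive, the slow, exact work never sits on the live request path.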

When a student needs coaching, the LLM receives the problem, the student's incorrect answer, and the pre-computed correct solution. The prompt engineering constrains the AI to act as a Socratic tutor, asking guiding questions rather than giving direct answers.

This approach achieved 100% accuracy for pilot questions and 99% accuracy across the broader 4th-8th grade math curriculum. The system now coaches reliably, matching or exceeding human consistency.
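The injection step can be sketched as context assembly, assuming the common chat-completion message format (a list of `role`/`content` dictionaries). The prompt wording and `build_coaching_messages` are illustrative, not the production prompt; the resulting list would be passed to whatever GPT-3.5 client the service uses.

```python
SYSTEM_PROMPT = (
    "You are a Socratic math tutor. A verified step-by-step solution is "
    "included for your reference only. Never state the final answer or the "
    "result of any step. Ask one guiding question at a time that helps the "
    "student find where their reasoning went wrong."
)


def build_coaching_messages(
    problem: str,
    student_answer: str,
    solution_steps: list[str],
    history: list[dict[str, str]],
) -> list[dict[str, str]]:
    """Assemble the chat context for one coaching turn.

    The pre-computed solution is injected as reference material, so the model
    guides toward a known-correct answer instead of solving the problem itself.
    """
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(solution_steps, start=1))
    context = (
        f"{SYSTEM_PROMPT}\n\n"
        f"Problem: {problem}\n"
        f"Student's answer: {student_answer}\n"
        f"Verified solution (do not reveal):\n{numbered}"
    )
    # Prior student/tutor turns follow the system context unchanged.
    return [{"role": "system", "content": context}, *history]
```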

Real-Time Integration: From 48 Hours to Seconds

Traditional coaching happens the next day. Teachers review tests, identify struggling students, and schedule follow-up sessions. By then, students have moved on mentally. The reasoning they used during the test is gone.

Integrated directly with Edulastic's API to pull test questions, student answers, and correct answers in real time. The moment a student submits an assessment, the system triggers coaching. No waiting period. No batch processing.
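The submission trigger can be sketched as an event handler. Edulastic's actual API payloads aren't described here, so the `ItemResult` fields and `on_assessment_submitted` are hypothetical; the point is that coaching jobs are created per missed item as soon as the event arrives, with no batch window.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemResult:
    question_id: str
    student_answer: str
    correct_answer: str


@dataclass(frozen=True)
class CoachingJob:
    student_id: str
    question_id: str
    student_answer: str


def on_assessment_submitted(student_id: str, items: list[ItemResult]) -> list[CoachingJob]:
    """Create one coaching job per missed item as soon as a submission arrives."""
    return [
        CoachingJob(student_id, item.question_id, item.student_answer)
        for item in items
        # Naive string match; a real grader would accept equivalent forms (0.5 vs 1/2).
        if item.student_answer.strip() != item.correct_answer.strip()
    ]
```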

WebSocket-Based Conversational Architecture

Built the system with separate microservices for speech-to-text, LLM coordination, and frontend rendering. WebSockets enable streaming responses, maintaining a conversational feel with 3-6 second response times.

The backend is stateless, supporting autoscaling as student usage grows. During pilot periods, the system handled concurrent coaching sessions without performance degradation.
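A minimal sketch of one stateless, streamed tutoring turn using Python's `asyncio`. `fake_llm_stream` stands in for a streaming LLM call and `send` stands in for a WebSocket send; both are assumptions. Because the request carries the context the turn needs (here just the student's utterance), no replica holds session state, which is what lets the backend autoscale.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable


async def fake_llm_stream(prompt: str) -> AsyncIterator[str]:
    """Stand-in for a streaming LLM call: yields the reply word by word."""
    for word in f"Walk me through your first step on {prompt}".split():
        await asyncio.sleep(0)  # yield control, as a real network stream would
        yield word + " "


async def handle_turn(request: dict, send: Callable[[str], Awaitable[None]]) -> str:
    """Serve one tutoring turn without server-side session state.

    Everything the turn needs arrives in `request`, so any replica can serve
    it; each chunk is forwarded to `send` (e.g. a WebSocket) as it arrives.
    """
    chunks = []
    async for chunk in fake_llm_stream(request["student_utterance"]):
        chunks.append(chunk)
        await send(chunk)
    return "".join(chunks)
```

Forwarding chunks as they are generated is what keeps the exchange feeling conversational: the student starts hearing the reply before the full response exists.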

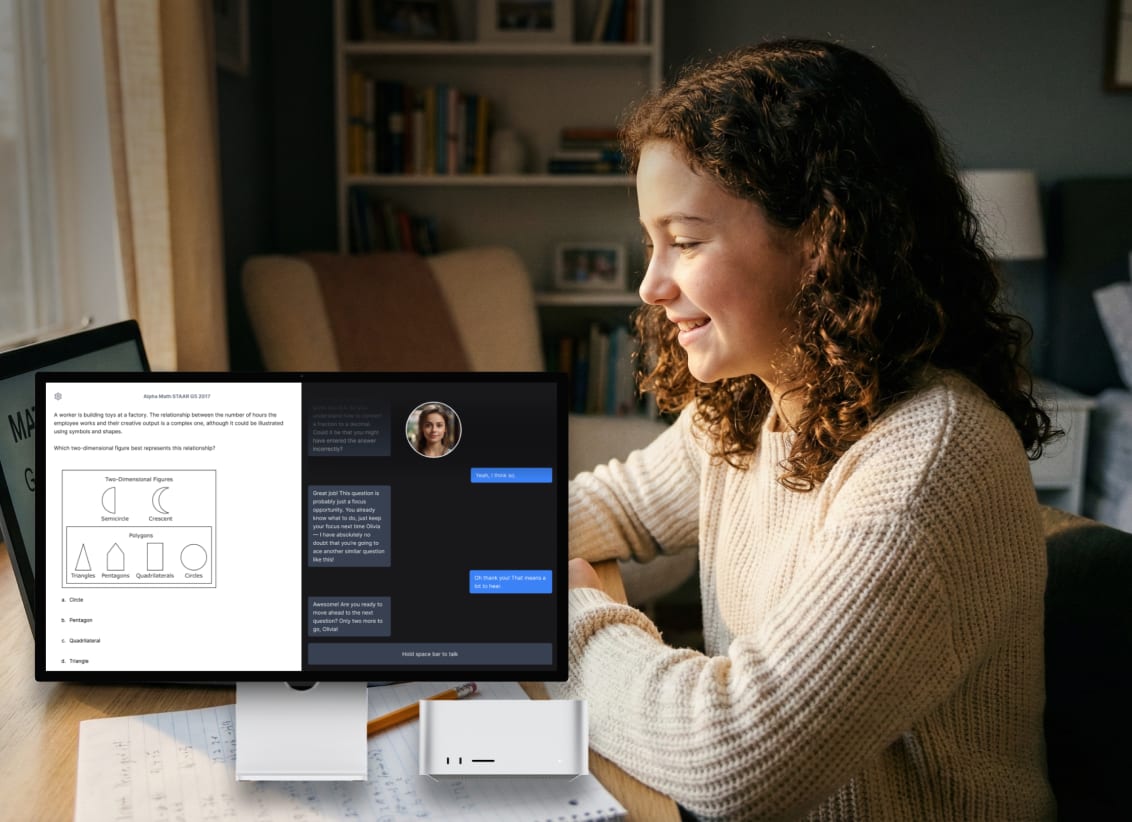

Students engage through voice conversation with a 3D avatar. The avatar is lip-synced to the tutor's speech, and gamified elements such as unlockable avatars increase engagement beyond voice-only interaction. User testing showed this visual component required careful design to avoid distraction.

Prompt Engineering for Pedagogical AI

GPT-3.5 wants to give answers. Students need to discover answers through guided questioning. This required extensive prompt engineering to constrain the LLM's behavior.

The system prompt establishes explicit guardrails: never provide direct answers, ask leading questions that reveal reasoning gaps, validate student thinking before moving forward, and break complex problems into smaller conceptual steps.
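Prompt guardrails can be backed by a cheap post-generation check. The sketch below is an assumption, not something the system is documented to do: `leaks_answer` scans a draft reply for any number equal to the pre-computed final answer, so the reply can be regenerated before the student hears it.

```python
import re
from fractions import Fraction

# Integers, simple fractions (3/4), and decimals (0.75), optionally negative.
_NUMBER = re.compile(r"-?\d+(?:/\d+|\.\d+)?")


def leaks_answer(reply: str, final_answer: Fraction) -> bool:
    """True if a draft tutor reply states the pre-computed final answer outright."""
    for token in _NUMBER.findall(reply):
        try:
            if Fraction(token) == final_answer:
                return True
        except ZeroDivisionError:  # e.g. "3/0" in the text
            continue
    return False
```

This only catches numerals; an answer spelled out in words would need a separate check.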

Socratic Method Implementation

When a student gets a problem wrong, the AI doesn't say "the answer is X." Instead, it asks: "Walk me through your first step. What operation did you use?" If the student made an arithmetic error, the AI guides them to recalculate rather than correcting directly.

This approach required iteration. Early versions were too helpful, essentially giving away answers through leading questions. Later versions were too cryptic, frustrating students. The final prompt balance maintains engagement while ensuring students do the cognitive work.

The pre-computed solution serves as the AI's roadmap. It knows where the student should end up and can recognize when reasoning diverges from the correct path. This enables precise, targeted questions that address the actual conceptual gap.
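Recognizing where reasoning diverges can be illustrated by comparing the intermediate values a student shows against the stored steps. This assumes student work has already been parsed into one value per step (for 3x + 4 = 19, the values 15 and 5), which is a simplification; `first_divergent_step` and `targeted_question` are hypothetical names.

```python
from fractions import Fraction
from typing import Optional


def first_divergent_step(expected: list[Fraction], shown: list[Fraction]) -> Optional[int]:
    """Index of the first step where the student's value differs from the solution.

    Only the steps the student actually showed are compared (zip stops at
    the shorter list); None means no divergence among those steps.
    """
    for index, (want, got) in enumerate(zip(expected, shown)):
        if want != got:
            return index
    return None


def targeted_question(index: int, shown: list[Fraction]) -> str:
    """Ask about the divergent step without revealing the correct value."""
    return f"Let's look at step {index + 1}. How did you get {shown[index]} there?"
```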

Student Engagement Monitoring with Computer Vision

Remote learning introduces new challenges. Students can walk away, open other tabs, or disengage without teachers noticing. The system needed to detect these behaviors in real-time.

Built a Vision Processor using computer vision to monitor student engagement through webcam analysis. The system tracks away-from-seat detection, off-screen attention, and other behavioral signals. Achieved 92% accuracy for away-from-seat detection.
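Raw per-frame detections are noisy, so a single missed detection shouldn't count as leaving the seat. A sketch of one common way to handle this, debouncing over consecutive frames, follows; the `person_present` flag is assumed to come from an upstream person or face detector, and the 15-frame threshold is a hypothetical default.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AwayDetector:
    """Turn noisy per-frame presence flags into "away" / "returned" events."""

    frames_to_trigger: int = 15  # consecutive empty frames before flagging
    _absent_run: int = field(default=0, init=False)
    _away: bool = field(default=False, init=False)

    def update(self, person_present: bool) -> Optional[str]:
        if person_present:
            self._absent_run = 0
            if self._away:
                self._away = False
                return "returned"
            return None
        self._absent_run += 1
        if not self._away and self._absent_run >= self.frames_to_trigger:
            self._away = True
            return "away"
        return None
```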

Anti-Spoofing and Frame Optimization

Students quickly learn to game monitoring systems. Hold up a photo to the webcam. Play a video loop. These attacks undermine the integrity of engagement data.

Implemented anti-spoofing detection to identify these attempts. The system analyzes frame-to-frame changes and behavioral patterns that distinguish live students from static images or recordings.
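Frame-to-frame analysis can be illustrated with two simple heuristics over a short window of per-frame fingerprints (for example, perceptual hashes): a held-up photo produces almost no change, and a replayed clip produces fingerprints that repeat with a fixed period. This is a sketch under those assumptions; the thresholds and `spoof_signal` are hypothetical. A very still live student could also trip the static check, which is one reason a production detector would combine such signals with other behavioral cues rather than act on them alone.

```python
from typing import Optional


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two frame fingerprints."""
    return bin(a ^ b).count("1")


def spoof_signal(hashes: list[int], still_bits: int = 2, min_period: int = 5) -> Optional[str]:
    """Flag a window of frame hashes that looks like a photo or a replayed loop.

    still_bits: differences at or below this many bits count as "no change".
    min_period: shortest loop length (in frames) worth reporting.
    """
    if len(hashes) < 2:
        return None
    diffs = [hamming(a, b) for a, b in zip(hashes, hashes[1:])]
    if max(diffs) <= still_bits:
        return "static_image"  # nothing moved across the whole window
    n = len(hashes)
    # A loop of length p repeats: frame i matches frame i + p throughout.
    # Requiring p <= n // 2 means at least two full cycles are in the window.
    for period in range(min_period, n // 2 + 1):
        if all(hamming(hashes[i], hashes[i + period]) <= still_bits for i in range(n - period)):
            return "possible_loop"
    return None
```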

Used perceptual hashing (pHash) to optimize processing. The system detects frame changes and skips identical frames, reducing computational load without sacrificing real-time monitoring. This enabled efficient analysis across multiple concurrent student sessions.
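A pure-Python sketch of a DCT-based perceptual hash in the style of the common pHash recipe (take the low-frequency 8x8 corner of a 32x32 DCT and threshold at the median), plus a frame skipper built on it. It assumes frames are already converted to 32x32 grayscale; `FrameSkipper` and its 4-bit tolerance are hypothetical, and a production system would more likely use an optimized imaging library.

```python
import math
from statistics import median
from typing import Optional

SIZE = 32  # frames are assumed already resized to 32x32 grayscale
LOW = 8    # keep the 8x8 lowest frequencies, giving a 64-bit hash

# Cosine basis for a 1-D DCT-II, truncated to the first LOW frequencies.
_BASIS = [
    [math.cos(math.pi * (2 * x + 1) * u / (2 * SIZE)) for x in range(SIZE)]
    for u in range(LOW)
]


def phash(pixels: list[list[float]]) -> int:
    """DCT-based perceptual hash of a SIZE x SIZE grayscale frame."""
    # DCT along each row, keeping only the low frequencies: SIZE x LOW.
    rows = [[sum(row[x] * _BASIS[u][x] for x in range(SIZE)) for u in range(LOW)] for row in pixels]
    # DCT down each column of that result: LOW x LOW coefficients.
    coeffs = [
        sum(rows[y][u] * _BASIS[v][y] for y in range(SIZE))
        for v in range(LOW)
        for u in range(LOW)
    ]
    threshold = median(coeffs)
    bits = 0
    for c in coeffs:
        bits = (bits << 1) | int(c > threshold)
    return bits


def hamming(a: int, b: int) -> int:
    return bin(a ^ b).count("1")


class FrameSkipper:
    """Skip frames whose perceptual hash barely differs from the last analyzed one."""

    def __init__(self, max_distance: int = 4) -> None:
        self.max_distance = max_distance  # hypothetical tolerance, in bits
        self._last: Optional[int] = None

    def should_process(self, pixels: list[list[float]]) -> bool:
        h = phash(pixels)
        if self._last is not None and hamming(h, self._last) <= self.max_distance:
            return False  # visually the same frame: skip the expensive classifiers
        # Compare future frames against the last *processed* frame, so slow
        # drift still triggers analysis once it accumulates.
        self._last = h
        return True
```

Hashing is far cheaper than running the engagement classifiers, so even a hash per frame pays off when most consecutive webcam frames look alike.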

Producer-Consumer Architecture for Event Detection

The monitoring system processes multiple event streams in parallel: webcam video, screen capture, and audio analysis. Built a producer-consumer architecture to handle these concurrent streams.
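A compact sketch of the producer-consumer pattern using Python's standard `queue` and `threading` modules. Each stream (webcam, screen, audio) runs as its own producer feeding one bounded queue, and a consumer drains it; string payloads stand in for frames and audio chunks, and `run` and the sentinel protocol are illustrative assumptions rather than the production design.

```python
import queue
import threading
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    stream: str   # "webcam", "screen", or "audio"
    payload: str  # stand-in for a frame or audio chunk


_DONE = object()  # sentinel each producer sends when its stream ends


def producer(stream: str, items: list[str], out: queue.Queue) -> None:
    for item in items:
        out.put(Event(stream, item))  # blocks if the queue is full
    out.put(_DONE)


def consume(events: queue.Queue, n_producers: int) -> list[Event]:
    """Drain events from all streams until every producer has finished."""
    finished, seen = 0, []
    while finished < n_producers:
        item = events.get()
        if item is _DONE:
            finished += 1
        else:
            seen.append(item)  # a real consumer would run a classifier here
    return seen


def run(streams: dict[str, list[str]]) -> list[Event]:
    q: queue.Queue = queue.Queue(maxsize=100)  # bounded: back-pressure on producers
    threads = [threading.Thread(target=producer, args=(name, items, q)) for name, items in streams.items()]
    for t in threads:
        t.start()
    results = consume(q, len(threads))
    for t in threads:
        t.join()
    return results
```

The bounded queue applies back-pressure: if classification falls behind, producers block instead of growing memory without limit.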

Multiple classifiers achieved F1 scores close to 100% on labeled datasets. Pilot studies showed a 30% decrease in off-task time when students knew the system was monitoring engagement. The presence of monitoring itself changed behavior.
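For reference, F1 is the harmonic mean of precision P and recall R, F1 = 2PR / (P + R), so a score near 100% requires both few false alarms and few misses. A small sketch of the computation for a binary classifier (1 = off-task, 0 = on-task, a labeling convention assumed here):

```python
def f1_score(y_true: list[int], y_pred: list[int]) -> float:
    """F1 for binary labels, where 1 marks the positive (off-task) class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives: precision or recall is zero (or undefined)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)
```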

FERPA Compliance and EdTech Security

Educational data requires strict privacy protections. FERPA regulations govern how student information can be stored, processed, and shared. Non-compliance isn't just a legal risk. It destroys trust with schools and parents.

Designed the entire system with FERPA compliance as a core requirement, not an afterthought. Student data is encrypted in transit and at rest. Access controls ensure only authorized personnel can view individual student records.

The real-time API integration with Edulastic required careful data handling. Test questions and student responses flow through the system but aren't stored longer than necessary for coaching. Once a session ends, personally identifiable information is purged according to retention policies.
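The retention rule can be sketched as a pure function over a session record. The field names, and treating the end of the session as the default retention boundary, are assumptions for illustration; actual retention periods would come from each school's policy.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class SessionRecord:
    session_id: str               # assumed non-identifying in this sketch
    student_name: Optional[str]   # PII
    student_email: Optional[str]  # PII
    transcript: Optional[str]     # the student's spoken responses
    ended_at: Optional[datetime]  # None while the session is still active
    purged: bool = False


def purge_if_expired(
    record: SessionRecord, now: datetime, retention: timedelta = timedelta(0)
) -> SessionRecord:
    """Return a copy with PII removed once the retention window has passed."""
    if record.purged or record.ended_at is None:
        return record  # already purged, or coaching still in progress
    if now - record.ended_at < retention:
        return record
    return replace(record, student_name=None, student_email=None, transcript=None, purged=True)
```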

Built audit logging for all data access. Schools can verify who accessed student data, when, and why. This transparency is essential for maintaining trust in AI-powered educational tools.
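A minimal sketch pairing the access check with the audit trail: every read attempt, allowed or denied, is logged with who, which student, why, and when before any data is returned. The role names, the in-memory log, and `StudentRecords` are hypothetical; a real deployment would scope access per school or class and write to durable, append-only storage.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    actor: str
    student_id: str
    reason: str
    allowed: bool
    at: datetime


class StudentRecords:
    AUTHORIZED_ROLES = frozenset({"teacher", "school_admin"})  # hypothetical roles

    def __init__(self, records: dict[str, dict]) -> None:
        self._records = records
        self.audit_log: list[AuditEntry] = []

    def read(self, actor: str, role: str, student_id: str, reason: str) -> dict:
        """Return a student's record if the role allows it; log the attempt either way."""
        allowed = role in self.AUTHORIZED_ROLES
        self.audit_log.append(AuditEntry(actor, student_id, reason, allowed, datetime.now(timezone.utc)))
        if not allowed:
            raise PermissionError(f"{actor} ({role}) may not read student records")
        return self._records[student_id]
```

Logging denied attempts as well as successful ones lets schools see who tried to access a record, not only who succeeded.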

HOW IT WORKS

The details.

Solving the Accuracy Problem Before Students Arrive

Off-the-shelf AI models are unreliable at math. They sometimes give wrong answers, which is unacceptable in a tutoring tool. We solved this by pre-computing the correct solution to every test question before any student session begins. When a student needs help, the AI already has the right answer in front of it, so its job shifts from solving the problem to guiding the student toward a solution it already knows. This approach achieved 99% accuracy on 4th to 8th grade math and 100% on the pilot questions.

Feedback in Seconds, Not the Next Day

Traditional coaching happens after teachers have reviewed tests and scheduled follow-up. By then, students have moved on mentally. We connected directly to the test platform's API so that coaching begins the moment a student submits an assessment. No waiting: the reasoning students used during the test is still fresh when the session starts.

Conversations That Feel Natural

We built the system using separate services for voice input, AI processing, and the on-screen avatar. This keeps response times at 3 to 6 seconds and lets the conversation flow smoothly. Students talk to a 3D avatar that responds in real time. During testing, the visual component required careful design to stay engaging without becoming a distraction.

An AI That Asks Questions Instead of Giving Answers

Left to itself, an AI will just tell students the answer. That does not help them learn. We spent significant time engineering prompts that force the AI to ask guiding questions instead. If a student makes an arithmetic error, the AI asks them to walk through their calculation again rather than correcting them directly. The pre-computed solution acts as the roadmap the AI follows without revealing the destination.

Watching for Students Who Have Walked Away

Remote learning creates a new problem: students can leave, open other tabs, or stop paying attention without anyone noticing. We built a computer vision system that monitors student engagement through the webcam. It detects when a student has left their seat or is looking away from the screen. Pilot studies showed this monitoring alone reduced off-task time by 30%, because students knew the system was watching.

Stopping Students From Holding Up a Photo

Students quickly figure out ways to fool monitoring systems, such as holding up a photo or playing a video loop on their phone. We built detection for these attempts by analyzing how frames change over time: a live student looks different from a static image. We also skip identical frames to save processing power, which kept the system running in real time without slowing down other applications.

Student Data Protected at Every Step

Educational data requires strict privacy protections. We built the entire system with FERPA compliance as a foundation. Student data is encrypted, access is limited to authorized staff, and personally identifiable information is deleted once a session ends. Schools can see a full audit trail of who accessed student data, when, and why.

OUTCOMES

What shipped.

99% accuracy on 4th-8th grade math problems

100% accuracy on pilot questions

75-97% test score improvements

Feedback delivery reduced from 48 hours to seconds

92% accuracy for away-from-seat detection

30% decrease in off-task time with monitoring

3-6 second response times

F1 scores close to 100% on engagement classifiers

KEY TAKEAWAYS

What we learned.

- Pre-compute solutions offline to overcome LLM mathematical reasoning limitations. Injecting known-correct solutions into prompts transformed GPT-3.5 from unreliable to 99% accurate for 4th-8th grade math.

- Immediate feedback matters more than perfect feedback later. Reducing coaching delivery from 48 hours to seconds enabled students to reflect while reasoning was fresh, directly contributing to 75-97% test score improvements.

- Prompt engineering for pedagogy requires different constraints than prompt engineering for accuracy. The challenge wasn't getting correct answers but teaching the AI to guide students toward discovering answers through Socratic questioning.

- Real-time API integration with assessment platforms enables seamless coaching experiences. Pulling questions and answers directly from Edulastic eliminated manual data entry and enabled instant coaching triggers.

- Engagement monitoring with computer vision does more than detect off-task behavior; it changes it. Pilot studies showed a 30% decrease in off-task time when students knew the system was monitoring, demonstrating a deterrent effect beyond pure detection.

- Stateless microservice architecture with WebSocket streaming maintains conversational feel while enabling autoscaling. Separating speech-to-text, LLM coordination, and frontend services allowed independent scaling based on load.

- FERPA compliance must be designed in from the start for EdTech systems. Privacy protections, data retention policies, and audit logging aren't optional features but core requirements for educational AI adoption.

IN SUMMARY

Bottom line.

Post-Test Coach transformed feedback delivery from a 48-hour delay to immediate, personalized coaching at scale. The pre-computation approach solved the mathematical reasoning problem that makes off-the-shelf LLMs unreliable for education, and pilot results showing 75-97% test score improvements demonstrate that AI tutoring works when engineered correctly.

The education sector's AI adoption jumped from 45% to 86% in one year, and systems like this show why. They don't replace teachers; they augment human educators by providing immediate, consistent, personalized feedback that scales to every student. As LLM capabilities improve, the architectural patterns we developed (pre-computation, solution injection, and Socratic prompt engineering) will enable even more sophisticated educational AI systems.

FAQ

Frequently asked.

How did you overcome GPT-3.5's limitations with mathematical reasoning?

What was the response time for the conversational AI tutoring system?

How much did test scores improve for students using the Post-Test Coach?

Why did you use pre-computed solutions instead of real-time LLM calculations?

What was the biggest challenge in making the LLM act as a Socratic tutor?

How do you ensure FERPA compliance with student data?
