NEUROTECHNOLOGY

MoveAgain BCI Platform

Advancing Neural Decoder Performance for Paralysis Patients

Discover how AE Studio partnered with Blackrock Neurotech to optimize the MoveAgain brain-computer interface platform, improving neural decoder accuracy and cross-session robustness for the only FDA-approved implantable BCI device.

THE CHALLENGE

The problem.

Brain-computer interfaces face a fundamental engineering challenge: neural signals drift over time. What worked in yesterday's session may not work today. Patients with paralysis using BCIs need systems that adapt quickly and maintain accuracy across sessions without lengthy recalibration.

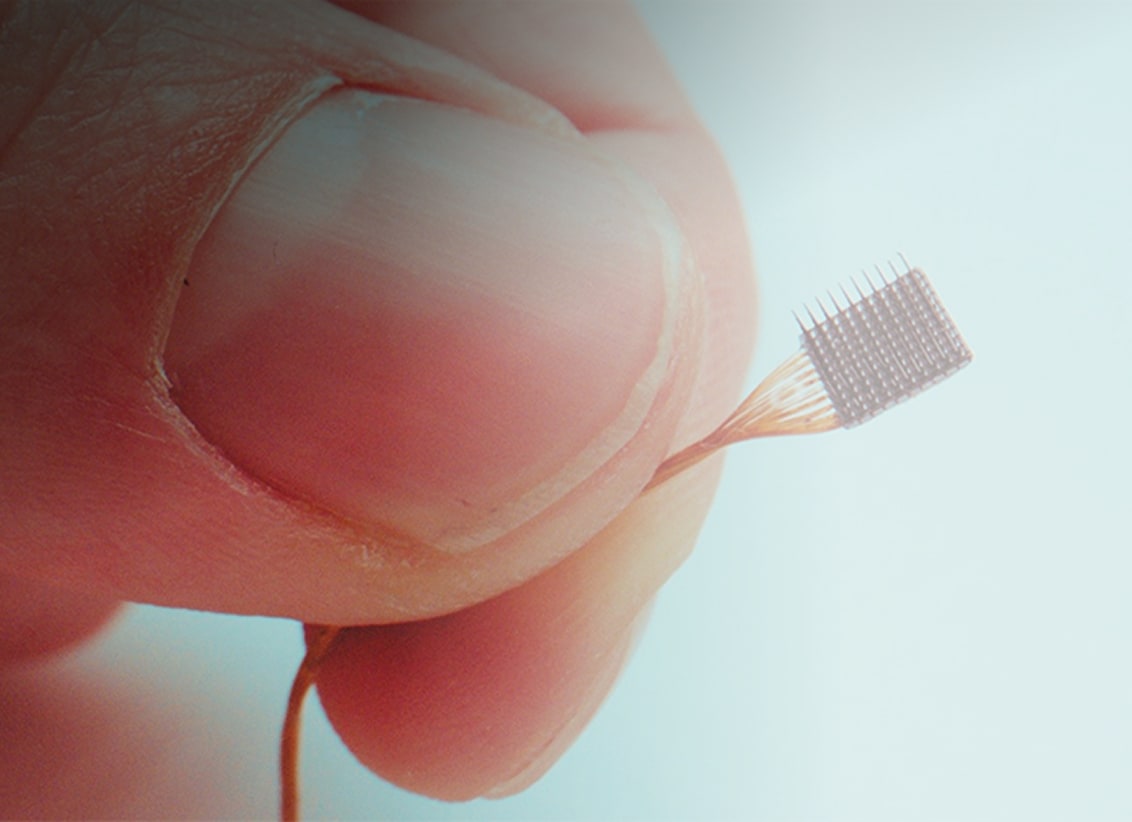

AE Studio partnered with Blackrock Neurotech, creator of the Utah Array, the only FDA-approved implanted BCI device, to advance their MoveAgain platform. MoveAgain enables patients with paralysis to control cursors and devices using their thoughts, but the neural decoders powering the system required optimization for real-world clinical use.

The technical challenges were significant: improving decoder accuracy for cursor movement and click classification, reducing the time required to retrain models between sessions, and building tools that clinical teams could use to analyze sessions without specialized data science support.

THE SOLUTION

What we built.

Self-Supervised Learning for Cross-Session Robustness

The breakthrough came from applying self-supervised learning techniques to neural decoder training. Traditional supervised approaches required extensive labeled data from each session, creating a cold-start problem every time a patient began a new session.

AE Studio demonstrated that self-supervised pre-training across subjects and sessions yielded robust cross-session decoding with improved accuracy. By learning general representations of neural activity patterns before fine-tuning on specific tasks, the decoder could generalize across the natural variability in brain signals, reducing the burden on patients and clinicians while maintaining high performance.
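
To make the idea concrete, here is a minimal sketch of one common self-supervised objective for neural data: hide a random subset of binned spike counts and score a model on how well it fills them back in, so no behavioral labels are needed. This is an illustration under stated assumptions, not the MoveAgain training code. The function names, the 25% mask ratio, and the squared-error score are all hypothetical choices made for readability.

```python
import random


def mask_spike_counts(counts, mask_ratio=0.25, mask_value=0, seed=None):
    """Hide a random subset of (time bin, channel) entries.

    counts: non-empty list of time bins, each a list of per-channel spike counts.
    Returns (masked_counts, targets), where targets maps (t, ch) to the
    original count that was hidden at that position.
    """
    rng = random.Random(seed)
    positions = [(t, ch) for t, row in enumerate(counts) for ch in range(len(row))]
    n_masked = max(1, round(mask_ratio * len(positions)))
    chosen = rng.sample(positions, n_masked)

    masked = [list(row) for row in counts]  # copy so the input is left untouched
    targets = {}
    for t, ch in chosen:
        targets[(t, ch)] = counts[t][ch]
        masked[t][ch] = mask_value
    return masked, targets


def reconstruction_error(predicted, targets):
    """Mean squared error, scored only on the hidden entries."""
    total = sum((predicted[t][ch] - y) ** 2 for (t, ch), y in targets.items())
    return total / len(targets)
```

During pre-training a model would see `masked` and be scored with `reconstruction_error` against `targets`; fine-tuning for cursor control would then start from those learned weights rather than from scratch.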

Neural Data Transformer Architecture Optimization

We optimized the Neural Data Transformer (NDT) architecture for the MoveAgain platform. This included systematic hyperparameter tuning for both fine-tuning and pre-training phases, ensuring parameters transferred reliably across runs, sessions, tasks, and subjects.

We also investigated contrastive learning approaches to determine if they could provide additional improvements to performance or robustness compared to existing NDT implementations. Model stabilization methods were developed to minimize retraining time in new sessions while maintaining decoder accuracy.

Real-Time In-Session Analysis Tools

Clinical BCI sessions are expensive and time-constrained. Losing an entire recording due to an undetected hardware issue or environmental noise is costly. We built in-session analysis tools capable of identifying experimental problems in real time.

The tools detected issues including environmental noise, errors in experimental or hardware setup, and unexpected task-locked neural responses, allowing clinical teams to fix problems immediately rather than discovering them during post-session analysis. This protected recordings that would otherwise have been lost and improved data quality.
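
One simple way such a check could work is sketched below: compute the RMS amplitude of each channel and flag channels whose RMS is a robust outlier relative to the rest of the array. This is a hypothetical example, not the tool that was built. The function names, the median/MAD outlier rule, and the threshold of 5 are assumptions chosen for illustration.

```python
import math
import statistics


def channel_rms(samples):
    """Root-mean-square amplitude of one channel's samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def flag_noisy_channels(channels, threshold=5.0):
    """Return the sorted names of channels whose RMS is a robust outlier.

    channels: dict mapping channel name -> list of voltage samples.
    A channel is flagged when its RMS lies more than `threshold` scaled
    median absolute deviations (MADs) from the median RMS of all channels.
    """
    rms = {name: channel_rms(samples) for name, samples in channels.items()}
    median = statistics.median(rms.values())
    mad = statistics.median(abs(v - median) for v in rms.values())
    if mad == 0:
        return []  # no usable spread estimate, so nothing is flagged
    # 1.4826 scales the MAD to match a standard deviation for normal data
    return sorted(
        name for name, v in rms.items()
        if abs(v - median) / (1.4826 * mad) > threshold
    )
```

Median and MAD are used instead of mean and standard deviation so that a single very noisy channel does not inflate the baseline it is being compared against.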

Automated Multi-Session Analytics Platform

As Blackrock scaled to multiple participants with up to two sessions per month each, manual analysis became unsustainable. We built an automated analytics system that ingested data from MoveAgain sessions, computed relevant metrics, and stored results in a structured database.

The platform provided high-level dashboards for cross-session performance tracking alongside detailed reports for within-session analysis. This reduced time between data acquisition and actionable insights, freeing clinical researchers to focus on interpretation rather than data processing.

- Centralized and automated storage of data, analysis, and trained models across all participants and sessions

- Automated signal quality analysis including temporal and spectral noise characterization and artifact detection

- Behavioral data quantification with velocity and trajectory profiling for cursor control tasks

- Cross-session performance dashboards enabling rapid comparison of decoder accuracy across the participant's measurement history
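
The ingest, compute, and store flow described above can be sketched with Python's built-in `sqlite3` module. This is only an illustration of the pattern. The table layout, column names, and function names are assumptions, not the schema used for MoveAgain.

```python
import sqlite3

SCHEMA = """
CREATE TABLE IF NOT EXISTS session_metrics (
    participant  TEXT NOT NULL,
    session_date TEXT NOT NULL,  -- ISO 8601 date, so text order is date order
    metric       TEXT NOT NULL,
    value        REAL NOT NULL,
    PRIMARY KEY (participant, session_date, metric)
)
"""


def open_store(path=":memory:"):
    """Open (or create) the metrics database and ensure the table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def record_metric(conn, participant, session_date, metric, value):
    """Store one metric; re-running the pipeline overwrites the old value."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO session_metrics VALUES (?, ?, ?, ?)",
            (participant, session_date, metric, value),
        )


def metric_history(conn, participant, metric):
    """Return [(session_date, value), ...] in date order for a dashboard."""
    rows = conn.execute(
        "SELECT session_date, value FROM session_metrics "
        "WHERE participant = ? AND metric = ? ORDER BY session_date",
        (participant, metric),
    )
    return rows.fetchall()
```

Keying each row on participant, session date, and metric makes the pipeline safe to re-run, and a cross-session dashboard reduces to a single ordered query per metric.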

Production Integration with Rust Development

Research findings needed to translate into the production MoveAgain platform. AE Studio's Rust developers extended the MoveAgain codebase to support self-supervised and pre-trained models, including adding a linear decoder and updating the NDT architecture.

This enabled closed-loop calibration improvements and brought research-demonstrated performance gains into the hands of patients. We also supported documentation and requirements generation for FDA compliance, leveraging our experience with both the research Python codebase and production Rust implementation.
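
For readers unfamiliar with the term, a linear decoder maps a vector of per-channel firing rates to a cursor velocity with one weighted sum per output dimension. The sketch below shows that mapping in Python for readability; the production implementation was in Rust, and the function name, argument layout, and two-dimensional output here are assumptions for illustration only.

```python
def decode_velocity(weights, bias, rates):
    """Map per-channel firing rates to a velocity vector.

    weights: one row per output dimension (e.g. vx, vy), one column per channel.
    bias:    one offset per output dimension.
    rates:   one firing rate per channel.
    """
    if any(len(row) != len(rates) for row in weights):
        raise ValueError("each weight row needs one entry per channel")
    return [
        sum(w * r for w, r in zip(row, rates)) + b
        for row, b in zip(weights, bias)
    ]
```

Because the mapping is a single matrix-vector product, it is cheap to evaluate in a closed-loop setting and makes a useful baseline next to a transformer-based decoder.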

HOW IT WORKS

The details.

Teaching the Decoder to Generalize Across Sessions

The core problem was that a decoder trained on one session would not work well in the next. Every time a patient sat down, the system was starting from scratch. We applied a learning approach where the model first learns general patterns across many subjects and sessions before being fine-tuned for a specific task. This meant the decoder already had a strong foundation each time it adapted to a new session, reducing the burden on patients and clinicians.

Improving the Architecture Systematically

We worked through the underlying model architecture in detail, tuning the settings that control how it trains and how it transfers what it has learned between sessions. We also tested alternative learning approaches to see if they could improve reliability or speed. The goal was a decoder that worked consistently across the natural variability in brain signals, not just under ideal conditions.

Catching Problems During the Session, Not After

Clinical sessions are expensive and hard to reschedule. Losing an entire recording because of a hardware issue discovered after the fact is a costly mistake. We built tools that monitor the session in real time and flag problems as they happen: environmental noise, equipment errors, and unexpected signal patterns. Clinical teams could fix issues on the spot rather than finding out the data was unusable the next day.

Automating What Used to Take Hours of Manual Work

As Blackrock worked with more participants across more sessions, reviewing each one manually became unsustainable. We built an automated analytics system that processes session data, computes standard metrics, and stores results in a structured database. Dashboards let the clinical team compare performance across sessions and participants at a glance. Detailed reports are available for any session that needs closer inspection.

- Centralized storage of data, analysis results, and trained models across all participants and sessions

- Automated signal quality checks for noise, interference, and data anomalies

- Cursor control behavior profiling including speed and path accuracy

- Cross-session dashboards for tracking decoder performance over time
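
As an illustration of the cursor profiling item above, speed and path directness can be computed directly from sampled cursor positions. This is a minimal sketch under assumed inputs (evenly sampled 2D positions); the function names and the path-efficiency definition are hypothetical and not taken from the MoveAgain analytics code.

```python
import math


def speeds(points, dt):
    """Speed for each step between consecutive cursor samples.

    points: list of (x, y) positions; dt: seconds between samples.
    """
    return [math.dist(a, b) / dt for a, b in zip(points, points[1:])]


def path_efficiency(points):
    """Straight-line distance from start to end divided by distance travelled.

    1.0 means the cursor moved in a perfectly direct line; lower values
    mean a more wandering path. Returns 0.0 if the cursor never moved.
    """
    travelled = sum(math.dist(a, b) for a, b in zip(points, points[1:]))
    if travelled == 0:
        return 0.0
    return math.dist(points[0], points[-1]) / travelled
```

Tracked across sessions, summary numbers like these give a behavioral view of decoder quality that complements accuracy metrics.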

Bringing Research Findings Into the Real Product

Demonstrating improvements in a research environment is only half the work. We extended the production version of the MoveAgain platform to support the new model types we had developed. This brought the performance gains into the hands of patients. We also supported the documentation required for FDA compliance, working across both the research codebase and the production software.

OUTCOMES

What shipped.

First FDA-approved implantable BCI platform supported

Superior cross-session decoder performance demonstrated

Reduced retraining time between sessions

Real-time in-session issue detection

Automated multi-session analytics platform

20+ concurrent behavioral detectors supported

Support for 2 participants with up to two sessions per month each

Python-to-Rust production implementation

HIPAA-compliant data handling

KEY TAKEAWAYS

What we learned.

- Self-supervised pre-training improves BCI decoder robustness. By learning general neural activity representations before task-specific fine-tuning, models generalize better across sessions and reduce patient burden during calibration.

- Real-time session monitoring prevents data loss. In-session tools that detect hardware issues, environmental noise, and setup errors immediately save costly clinical recordings that would otherwise be lost to post-hoc discovery.

- Automated analytics scale clinical BCI research. As participant numbers grow, manual analysis becomes a bottleneck. Centralized data pipelines with automated quality metrics and cross-session dashboards enable faster clinical iteration.

- Research-to-production translation requires dual expertise. Optimizing algorithms in Python research environments is only half the challenge; production deployment in Rust for embedded systems requires teams that span both domains.

- Clinical neurotechnology demands rigorous data handling. FDA compliance, HIPAA requirements, and patient privacy necessitate secure pipelines and transparent collaboration between research and clinical teams.

IN SUMMARY

Bottom line.

AE Studio's partnership with Blackrock Neurotech demonstrates how applied machine learning and production software engineering can advance clinical brain-computer interfaces. By combining self-supervised learning techniques, real-time analysis tools, and automated analytics platforms, we helped extend the capabilities of the MoveAgain platform, bringing improved decoder performance and clinical usability to patients with paralysis. This collaboration exemplifies AE Studio's mission: applying cutting-edge AI and software development to neurotechnology that genuinely improves human lives.

FAQ

Frequently asked.

What is the MoveAgain platform and why is it significant?

How did self-supervised learning improve the neural decoder performance?

What types of issues did the in-session analysis tools detect?

How did AE Studio ensure HIPAA compliance and data security for clinical neural data?

Why was Rust used for the production implementation instead of Python?

What is the Neural Data Transformer (NDT) and how was it optimized?

How does this work relate to AE Studio's broader BCI expertise?

What was the typical development timeline for the different project components?
